Professor Michail Antoniou

Chair in RF Sensing Systems

Profile of Dr Michail Antoniou, Electronic, Electrical and Systems Engineering, University of Birmingham

The increasing prevalence of drones is a major concern for the safety and security of sites such as airports, classified areas, protected areas, and large gatherings of people such as festivals.

Radar can reliably and accurately track drones in real time. It provides an all-day, all-weather solution for identifying the presence of drones and protecting against accidental or malicious intrusions.

However, the detection and tracking of drones by radar is complicated by their small radar cross-section, as well as their low-altitude, low-velocity flight characteristics.

Additionally, drones occupy the same low-altitude airspace as birds and have similar flight characteristics, such as velocity and height, as well as a similar radar cross-section.

Therefore, for reliable airspace monitoring using radar, it is essential to be able to distinguish between birds and drones.

Assistant Professor in Ornithology and Animal Conservation

Dr Jim Reynolds has worked on the reproductive biology and the nutritional ecology of birds from many different and diverse orders including passerines, geese, grouse, kingfishers and terns.

Professor of Biogeography

Professor Jon Sadler is a biogeographer and ecologist researching population and assemblage dynamics in animals and occasionally plants.

Research Fellow

Staff profile for Mohammed Jahangir

Post Doctoral Research Associate

Dr Joe Wayman researches questions around moorland management within the UK and the impacts on biodiversity and wildfire risk.

Dr George Atkinson (Alumni)

Daniel White (Graduated; for current work see Distributed and Multi-Static Radar)

Holly Dale (Alumni)

The Mapping and Enabling Future Airspace (MEFA) research program aims to assess 3D staring radar for the detection and tracking of individual birds, individual birds in small groups, and trajectory behaviours in large flocks. This work is carried out in collaboration with colleagues at the Schools of Geography, Earth and Environmental Science, as well as the School of Biosciences at the University.

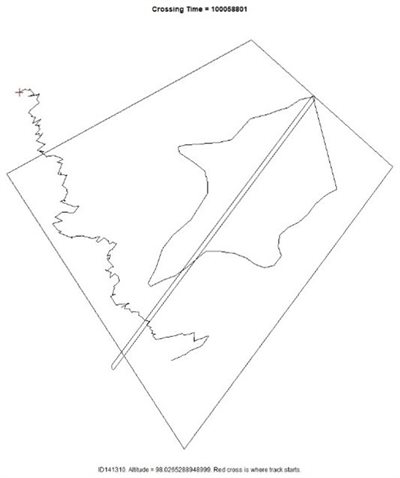

To understand the radar signatures of birds, and to begin building a dataset of control bird targets for classification, an experiment was conducted in which birds of prey were flown by professional handlers from the International Centre for Birds of Prey (ICBP). Four birds with differing flight behaviours were flown, each equipped with a GPS tag to provide accurate location data that could be correlated with the tracker output of a staring L-band radar.

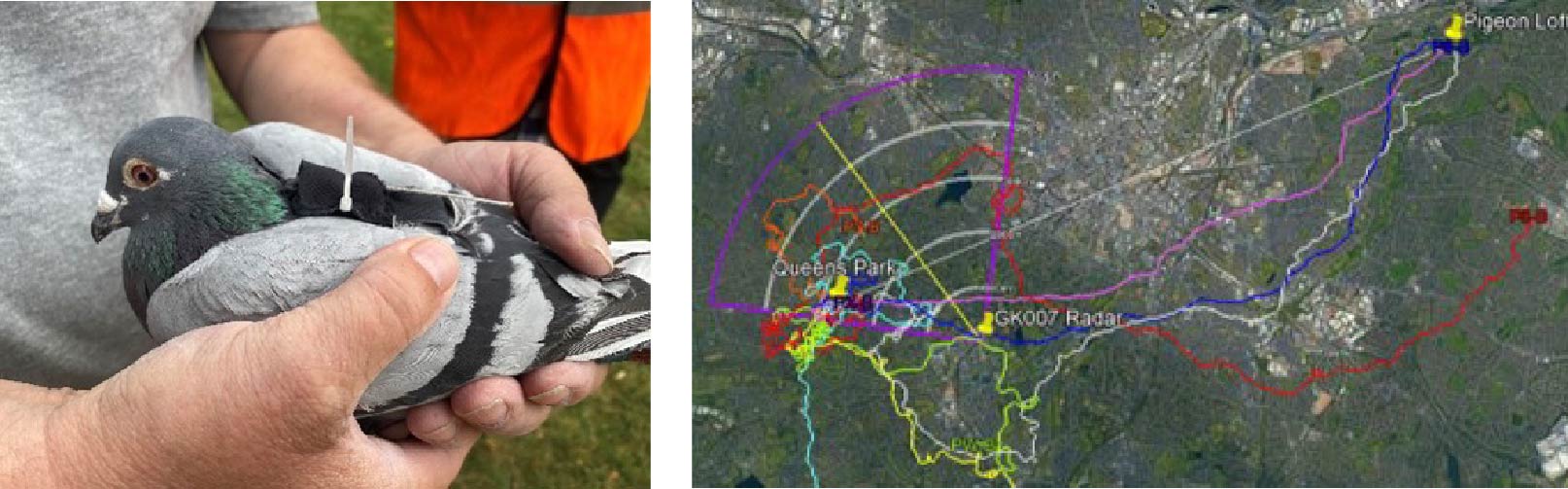

In addition to birds of prey, a similar experimental trial was conducted in which racing pigeons, equipped with GPS tags, were flown from a release location in the field of view of the radar back to their loft. These pigeons were detected and tracked by the radar, and the resulting spectrograms can be used to further develop classification algorithms.
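One way to correlate GPS tag data with radar tracker output is to interpolate the GPS fixes onto the radar track timestamps and measure the horizontal separation at each radar update. The following is a minimal sketch of that idea, not the processing used in the trials. It assumes both data sources have already been converted to a shared local Cartesian frame in metres and a shared clock in seconds; the function name and the example values are hypothetical.

```python
import numpy as np

def gps_track_separation(gps_t, gps_xy, track_t, track_xy):
    """Horizontal distance between a radar track and a GPS-tagged bird.

    Assumes (hypothetically) that both inputs share one clock (seconds)
    and one local Cartesian frame (metres). gps_t must be increasing.
    """
    gps_t = np.asarray(gps_t, dtype=float)
    gps_xy = np.asarray(gps_xy, dtype=float)
    track_t = np.asarray(track_t, dtype=float)
    track_xy = np.asarray(track_xy, dtype=float)

    # Only compare radar updates that fall inside the GPS recording window.
    inside = (track_t >= gps_t[0]) & (track_t <= gps_t[-1])

    # Linearly interpolate the GPS position at each radar update time.
    gps_x = np.interp(track_t[inside], gps_t, gps_xy[:, 0])
    gps_y = np.interp(track_t[inside], gps_t, gps_xy[:, 1])

    dx = track_xy[inside, 0] - gps_x
    dy = track_xy[inside, 1] - gps_y
    return np.hypot(dx, dy)

# Illustrative values only: a bird flying 100 m east in 10 s.
separation = gps_track_separation(
    gps_t=[0.0, 10.0],
    gps_xy=[[0.0, 0.0], [100.0, 0.0]],
    track_t=[2.0, 5.0, 12.0],          # the last update is outside the GPS window
    track_xy=[[20.0, 3.0], [50.0, 4.0], [120.0, 0.0]],
)
```

A track whose separation stays small over many updates is a plausible match for the tagged bird; the acceptance threshold would depend on the radar's positional accuracy, which is not stated here.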

As well as measuring control bird targets, for which the identity and GPS location are known, the MEFA project has collected an enormous catalogue of detection and tracking data for opportune birds, recorded every day the radar is operational throughout the year. To label some of this data, we developed a line transect method in which an observer records the time and position of an identified bird crossing a virtual line. With this method, together with the tracks produced by the radar over the seasons, ecologists will be able to produce a measure of the biomass of birds in the field of view of the radar.

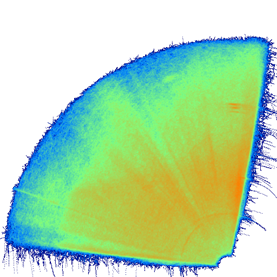

The trajectory characteristics of the known bird targets can also feed into the classification of different bird species observed by the radar. To characterise the many tracks produced by the radar over long time periods, we developed a tool to generate heatmaps of activity. These heatmaps can be filtered by time as well as by track characteristics such as height and velocity. This allows us to build up a picture of urban bird activity and to study how that activity changes over time.

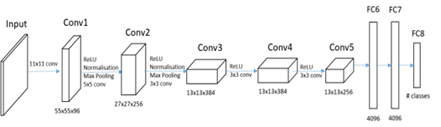
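The heatmap idea can be illustrated with a short sketch: filter track points by time, height and speed, then count the remaining points per grid cell. This is not the tool described above, only an assumed minimal version; the function name, parameter names, and the sample values are hypothetical.

```python
import numpy as np

def activity_heatmap(x, y, t, height, speed, *, extent, bins=50,
                     t_range=None, height_range=None, speed_range=None):
    """Count track points per grid cell after optional filtering.

    extent is ((xmin, xmax), (ymin, ymax)); each *_range is (low, high)
    or None to skip that filter. All names here are illustrative.
    """
    x, y, t, height, speed = (np.asarray(a, dtype=float)
                              for a in (x, y, t, height, speed))
    keep = np.ones(x.shape, dtype=bool)
    for values, limits in ((t, t_range),
                           (height, height_range),
                           (speed, speed_range)):
        if limits is not None:
            low, high = limits
            keep &= (values >= low) & (values <= high)

    (xmin, xmax), (ymin, ymax) = extent
    counts, xedges, yedges = np.histogram2d(
        x[keep], y[keep], bins=bins,
        range=[[xmin, xmax], [ymin, ymax]])
    return counts, xedges, yedges

# Four made-up track points (metres, seconds, metres, metres per second).
heat, xedges, yedges = activity_heatmap(
    x=[10, 20, 30, 900], y=[10, 20, 30, 900], t=[0, 1, 2, 3],
    height=[50, 150, 60, 40], speed=[10, 12, 30, 8],
    extent=((0, 1000), (0, 1000)), bins=2,
    height_range=(0, 100), speed_range=(0, 20))
```

In this example only the first and last points pass both filters, so the 2 x 2 grid holds one count in each diagonal cell. Comparing heatmaps built with different `t_range` values is one simple way to look at changes in activity over time.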

For drone surveillance with radar to be effective, systems need to be able to distinguish between drones and birds. In recent research, machine learning techniques using convolutional neural networks (CNNs) have shown great promise in their ability to distinguish drones from birds with high accuracy.

We have developed a CNN classifier, trained on spectrogram images of drones and birds acquired from the radars at our advanced network radar facility. The drone spectrograms were constructed from measurements of four different kinds of quadcopter drone, with varying sizes of body and propellers. The classification accuracy of the CNN for distinguishing drones from birds is 92.5%, with a false positive rate of 5.4% (bird spectrograms classified as drones).
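For readers unfamiliar with the two figures quoted above, the sketch below shows how accuracy and false positive rate are conventionally computed for a two-class drone/bird problem. The labels in the example are made up purely to exercise the function and do not reproduce the reported results; the function name and label encoding are assumptions.

```python
import numpy as np

def drone_bird_metrics(y_true, y_pred):
    """Accuracy and false positive rate, with 1 = drone and 0 = bird.

    A false positive is a bird spectrogram classified as a drone.
    """
    y_true = np.asarray(y_true, dtype=int)
    y_pred = np.asarray(y_pred, dtype=int)

    true_pos = np.sum((y_true == 1) & (y_pred == 1))
    true_neg = np.sum((y_true == 0) & (y_pred == 0))
    false_pos = np.sum((y_true == 0) & (y_pred == 1))

    accuracy = (true_pos + true_neg) / y_true.size
    # Fraction of all bird examples that were labelled as drones.
    false_positive_rate = false_pos / (false_pos + true_neg)
    return accuracy, false_positive_rate

# Hypothetical labels for eight spectrograms (not real data).
truth      = [1, 1, 1, 0, 0, 0, 0, 1]
prediction = [1, 1, 0, 0, 0, 1, 0, 1]
accuracy, fpr = drone_bird_metrics(truth, prediction)
```

Here six of the eight predictions are correct (accuracy 0.75) and one of the four birds is mislabelled as a drone (false positive rate 0.25).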

The classification performance of the CNN was found to be highly dependent on the presence or absence of micro-Doppler components in the spectrograms, which have been shown to originate from the rotation of the drone propellers. The strength of these micro-Doppler components is correlated with both the distance from the radar and the size of the drone propeller blades. Therefore, smaller drones, or drones at greater distances from the radar, are more likely to be classified as birds.

Part of the difficulty of using neural networks for classification is the large datasets of images required. So far, we have produced a large dataset of control drone target spectrograms as well as opportune bird target spectrograms.

For drones, micro-Doppler returns originate from the rotational motion of the rotor blades and appear alongside the body return of the drone. These returns appear as a repeated set of lines within the spectrograms of these targets.

One way to enlarge the dataset of drone spectrograms is to simulate the radar return from a rotating target. The simulation we developed uses measured propeller rotation rates and incorporates real noise measured by the staring radar. It produces spectrograms that closely match those of real drone targets, which can then be used to bolster the training and testing datasets used for classification.
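As an illustration of the general principle, the sketch below simulates a drone as a body moving at constant radial velocity plus point scatterers at the blade tips of a single rotor, then forms a spectrogram with a short-time Fourier transform. It is a simplified stand-in, not the simulation described above: every parameter value (wavelength, pulse repetition frequency, rotation rate, blade radius, amplitudes) is an assumed placeholder, and synthetic Gaussian noise is used where the real simulation uses measured radar noise.

```python
import numpy as np

def simulate_rotor_return(duration=0.5, prf=8000.0, wavelength=0.23,
                          body_velocity=5.0, rotor_rate=100.0,
                          blade_radius=0.12, n_blades=2,
                          blade_amplitude=0.3, noise_std=0.05, seed=0):
    """Complex baseband return from a body plus rotating blade tips.

    All defaults are illustrative assumptions, not measured values.
    """
    t = np.arange(int(duration * prf)) / prf
    # Body approaching at constant radial velocity: range = -v * t,
    # giving a single Doppler line at 2 * v / wavelength.
    signal = np.exp(1j * 4 * np.pi * body_velocity * t / wavelength)
    # Each blade tip is a point scatterer whose range oscillates
    # sinusoidally about the body as the rotor turns.
    for k in range(n_blades):
        angle = 2 * np.pi * rotor_rate * t + 2 * np.pi * k / n_blades
        tip_range = blade_radius * np.cos(angle) - body_velocity * t
        signal = signal + blade_amplitude * np.exp(
            -1j * 4 * np.pi * tip_range / wavelength)
    # Synthetic complex Gaussian noise (placeholder for measured noise).
    rng = np.random.default_rng(seed)
    noise = noise_std * (rng.standard_normal(t.size)
                         + 1j * rng.standard_normal(t.size))
    return t, signal + noise

def spectrogram(signal, prf, window=256, hop=64):
    """Hann-windowed STFT; rows are Doppler bins, columns are time frames."""
    taper = np.hanning(window)
    starts = range(0, signal.size - window + 1, hop)
    frames = np.stack([signal[s:s + window] * taper for s in starts])
    spectrum = np.fft.fftshift(np.fft.fft(frames, axis=1), axes=1)
    freqs = np.fft.fftshift(np.fft.fftfreq(window, d=1.0 / prf))
    power_db = 20 * np.log10(np.abs(spectrum) + 1e-12)
    return freqs, power_db.T

prf = 8000.0
t, echo = simulate_rotor_return(prf=prf)
freqs, power_db = spectrogram(echo, prf)
```

Because the analysis window (32 ms with these values) spans several rotor revolutions, the blade modulation shows up as a set of evenly spaced lines either side of the body Doppler line, which is the repeated-line pattern described above. Varying `blade_radius`, `blade_amplitude` and `noise_std` gives a rough feel for how smaller propellers or weaker returns make those lines harder to see.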